How To Add Your Sitemap To Your Robots.txt File

This blog post was updated 11 May 2020

If you are a member of a marketing team or a website developer, you will want your site to be seen in search results. To be shown in search results, your website and its various web pages need to be crawled and indexed by search engine bots (robots).

Two files on the technical side of your website help these bots find what they need: robots.txt and the XML sitemap.

Robots.txt

The robots.txt file is a simple text file placed in your site's root directory. It uses a set of instructions to tell search engine robots which pages on your website they can and cannot crawl.

The robots.txt file can also be used to block specific robots from accessing the website. For example, if a website is in development, it may make sense to block robots from having access until it's ready to be launched.
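As a rough illustration of these blocking rules, the sketch below uses Python's standard `urllib.robotparser` module to check what two sample robots.txt files allow. The file contents, the crawler name `BadBot`, and the `/blog/` path are assumptions made up for this example.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for a site still in development:
# every crawler is asked to stay away from every path.
BLOCK_ALL = """\
User-agent: *
Disallow: /
"""

# Hypothetical robots.txt that blocks one crawler ("BadBot" is a made-up name)
# and leaves the site open to everyone else.
BLOCK_ONE = """\
User-agent: BadBot
Disallow: /

User-agent: *
Disallow:
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if robots_txt lets user_agent crawl url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    page = "https://befound.pt/blog/"  # illustrative path, not a real page
    print("dev site, Googlebot:", is_allowed(BLOCK_ALL, "Googlebot", page))
    print("live site, BadBot:", is_allowed(BLOCK_ONE, "BadBot", page))
    print("live site, Googlebot:", is_allowed(BLOCK_ONE, "Googlebot", page))
```

Keep in mind that robots.txt is a request to well-behaved crawlers, not an access control mechanism.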

Learn all about robots.txt

Read our guide to robots.txt and SEO.

The robots.txt file is usually the first place crawlers visit when accessing a website. Even if you want all robots to have access to every page on your website, it's still good practice to add a robots.txt file that allows this.

Robots.txt files should also include the location of another very important file: the XML Sitemap. This provides details of every page on your website that you want search engines to discover.

In this post, we are going to show you how and where you should reference the XML sitemap in the robots.txt file. But before that, let's look at what a sitemap is and why it is important.

XML Sitemaps

An XML sitemap is an XML file that contains a list of all pages on a website that you want robots to discover and access.

For example, you may want search engines to access all of your blog posts, in order for them to appear in the search results. However, you might not want them to have access to your tag pages, since these may not make good landing pages and should therefore not be included in the search results.

Learn all about XML Sitemaps

Read our guide to XML sitemaps.

XML sitemaps can also contain additional information about each URL, in the form of metadata. And just like robots.txt, an XML sitemap is a must-have. It's not only important to make sure search engine bots can discover all of your pages, but also to help them understand the importance of your pages.
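To make the structure concrete, here is a minimal sketch that builds a small XML sitemap with Python's standard library. The page URLs, the dates, the priority values, and the `/tag/` exclusion rule are assumptions for illustration; the element names (`urlset`, `url`, `loc`, `lastmod`, `priority`) come from the Sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages, exclude_prefixes=("/tag/",)):
    """Build sitemap XML from (url, lastmod, priority) tuples.

    lastmod and priority are optional metadata and may be None.
    URLs whose path starts with one of exclude_prefixes are left out.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod, priority in pages:
        if urlsplit(loc).path.startswith(tuple(exclude_prefixes)):
            continue  # e.g. skip tag pages that make poor landing pages
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
        if priority is not None:
            ET.SubElement(url, "priority").text = f"{priority:.1f}"
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical pages: two blog posts and one tag page that gets excluded.
    print(build_sitemap([
        ("https://befound.pt/blog/post-1/", "2020-05-11", 0.8),
        ("https://befound.pt/blog/post-2/", None, None),
        ("https://befound.pt/tag/seo/", None, None),
    ]))
```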

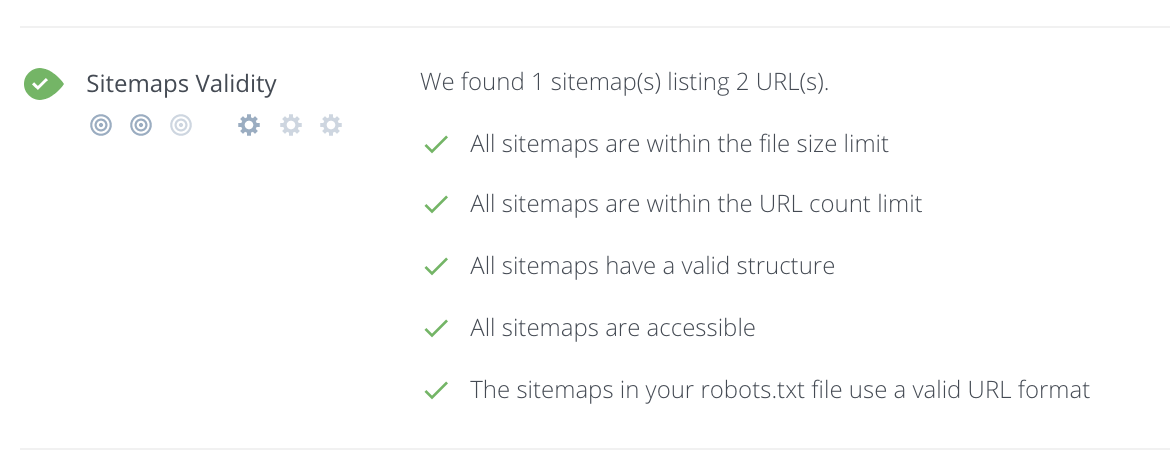

You can check that your sitemap has been set up correctly by running a Free SEO Audit.

How Are robots.txt & Sitemaps Related?

Back in 2006, Yahoo, Microsoft and Google united to support a standardized protocol for submitting a website's pages via XML sitemaps. You were required to submit your XML sitemaps through Google Search Console, Bing Webmaster Tools and Yahoo, while some other search engines, such as DuckDuckGo, use results from Bing/Yahoo.

After about six months, in April 2007, they joined in support of a system to check for XML sitemaps via robots.txt, known as Sitemaps Autodiscovery.

This meant that even if you did not submit the sitemap to individual search engines, it was OK. They would find the sitemap location from your site's robots.txt file first.
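Autodiscovery can be pictured from the crawler's side with a short sketch. This is not how any particular search engine implements it; it simply uses Python's `urllib.robotparser` (the `site_maps()` method needs Python 3.8 or later) to pull sitemap locations out of a sample robots.txt whose contents are assumed for the example.

```python
from urllib.robotparser import RobotFileParser


def discover_sitemaps(robots_txt: str) -> list[str]:
    """Return every sitemap URL declared in the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() returns None when no Sitemap line is present.
    return parser.site_maps() or []


if __name__ == "__main__":
    # Hypothetical robots.txt contents.
    sample = """\
User-agent: *
Disallow:

Sitemap: https://befound.pt/sitemap.xml
"""
    print(discover_sitemaps(sample))
```

To try this against a live site, `RobotFileParser` can also fetch the file itself via `set_url()` followed by `read()`.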

(NOTE: Sitemap submission is still available through most search engines, but don't forget, Google & Bing aren't the only search engines!)

As a result, the robots.txt file became even more significant for webmasters, because it lets them easily pave the way for search engine robots to discover all the pages on their website.

How To Add Your XML Sitemap To Your Robots.txt File

Here are three simple steps to adding the location of your XML sitemap to your robots.txt file:

Step #1: Locate Your Sitemap URL

If your website has been developed by a third-party developer, you need to first check if they provided your site with an XML sitemap.

By default, the URL of your sitemap will be /sitemap.xml. For example, the XML sitemap for https://befound.pt is

https://befound.pt/sitemap.xml

So type this URL in your browser with your domain in place of 'befound.pt'.

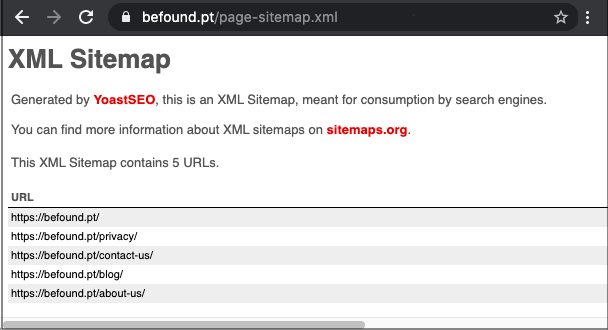

Some websites have more than one XML sitemap, which requires a sitemap for sitemaps (known as a sitemap index). For example, if you're using the Yoast SEO plugin with WordPress, a sitemap index will automatically be added to /sitemap_index.xml.

https://befound.pt/sitemap_index.xml
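If you check several sites, the two common locations above can be generated rather than typed by hand. The helper below is a small sketch; the function name and the default to `https` when no scheme is given are assumptions, and other platforms may use different sitemap paths.

```python
from urllib.parse import urlsplit, urlunsplit

# The two locations mentioned above; other setups may use different paths.
COMMON_SITEMAP_PATHS = ("/sitemap.xml", "/sitemap_index.xml")


def candidate_sitemap_urls(site: str) -> list[str]:
    """Return the usual sitemap URLs to try for a domain or page URL."""
    # Assume https when the caller passes a bare domain such as "befound.pt".
    parts = urlsplit(site if "://" in site else f"https://{site}")
    return [
        urlunsplit((parts.scheme, parts.netloc, path, "", ""))
        for path in COMMON_SITEMAP_PATHS
    ]


if __name__ == "__main__":
    for url in candidate_sitemap_urls("befound.pt"):
        print(url)
```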

You may also be able to locate your sitemap via Google search by using search operators, as shown in the examples below:

site:befound.pt filetype:xml

OR

filetype:xml site:befound.pt inurl:sitemap

But this will only work if your site is already crawled and indexed by Google.

If you have access to your website's File Manager, you can search for your XML sitemap file there.

If you do not find a sitemap on your website, you can create one yourself. There are lots of tools to help with this, including XML Sitemap generator which is free for up to 500 pages, but you will need to manually remove any pages you don't want to be included. Alternatively, follow the protocol explained at Sitemaps.org.

Step #2: Locate Your Robots.txt File

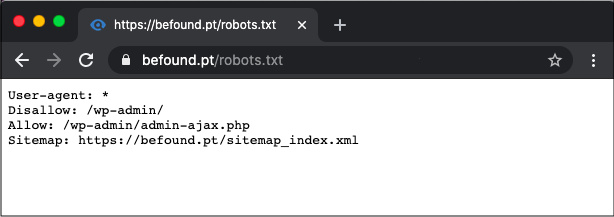

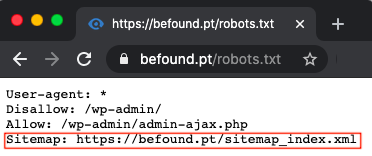

You can check whether your website has a robots.txt file by typing /robots.txt after your domain, for example, https://befound.pt/robots.txt.

If you do not have a robots.txt file then you will have to create one and add it to the root directory of your web server. To do this, you will need access to your web server. Usually, it is put in the same place where your site's main "index.html" lies. The location of these files depends on the kind of web server software you have. You should consider getting the help of a web developer if you are not well accustomed to these files.

Just remember to use all lower case for the file name that contains your robots.txt content. Do not use Robots.TXT or Robots.Txt as your filename.
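The two rules in this step, root directory and lower-case file name, can be expressed as a quick check. The function names below are hypothetical and the sketch only inspects the URL; it does not fetch anything.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(site: str) -> str:
    """Return the single URL where crawlers look for a site's robots.txt."""
    # Assume https when the caller passes a bare domain such as "befound.pt".
    parts = urlsplit(site if "://" in site else f"https://{site}")
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def is_valid_robots_location(url: str) -> bool:
    """True only if the path is exactly /robots.txt (root, all lower case)."""
    return urlsplit(url).path == "/robots.txt"


if __name__ == "__main__":
    print(robots_txt_url("https://befound.pt/blog/some-post/"))
    for candidate in (
        "https://befound.pt/robots.txt",
        "https://befound.pt/Robots.TXT",
        "https://befound.pt/blog/robots.txt",
    ):
        print(candidate, is_valid_robots_location(candidate))
```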

Step #3: Add Sitemap Location To Robots.txt File

Now, open up robots.txt at the root of your site. Again, you need access to your web server to do so. So, ask a web developer or your hosting company for directions if you don't know how to locate and edit your website's robots.txt file.

To facilitate auto-discovery of your sitemap file through your robots.txt, all you have to do is place a directive with the URL in your robots.txt, as shown in the sample below:

Sitemap: https://befound.pt/sitemap.xml

So, the robots.txt file looks like this:

Sitemap: https://befound.pt/sitemap.xml
User-agent: *
Disallow:

NOTE: The directive containing the sitemap location can be placed anywhere in the robots.txt file. It is independent of the user-agent line, so it does not matter where it is placed.
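If you would rather script this step than edit the file by hand, the sketch below appends a Sitemap line to existing robots.txt text only when it is not already there. The function name is hypothetical, and reading and writing the actual file on your server is left out because it depends on your hosting setup.

```python
from urllib.robotparser import RobotFileParser


def add_sitemap_directive(robots_txt: str, sitemap_url: str) -> str:
    """Return robots_txt with a Sitemap line for sitemap_url, added if missing."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    if sitemap_url in (parser.site_maps() or []):
        return robots_txt  # already declared, nothing to change
    body = robots_txt.rstrip("\n")
    # The directive is independent of any user-agent group, so it is simply
    # appended at the end, separated from existing rules by a blank line.
    separator = "\n\n" if body else ""
    return f"{body}{separator}Sitemap: {sitemap_url}\n"


if __name__ == "__main__":
    existing = "User-agent: *\nDisallow:\n"
    print(add_sitemap_directive(existing, "https://befound.pt/sitemap.xml"))
```

Running the function a second time on its own output leaves the text unchanged, so it is safe to apply repeatedly.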

You can see how this looks in action on a live site by visiting your favorite website and adding /robots.txt to the end of the domain. For example, https://befound.pt/robots.txt.

What If You Have Multiple Sitemaps?

Based on Google & Bing's sitemap guidelines, XML sitemaps shouldn't contain more than 50,000 URLs and should be no larger than 50MB when uncompressed. So for a larger site with many URLs, you can create multiple sitemap files.

You must list all sitemap file locations in a sitemap index file. The XML format of the sitemap index file is similar to the sitemap file, making it a sitemap of sitemaps.
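The splitting and indexing described here might be sketched as follows. The 50,000 limit comes from the guidelines above and the `sitemapindex`, `sitemap` and `loc` element names from the Sitemaps.org protocol; the function names and the sample file names are assumptions, and the 50MB size limit is not checked.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000


def chunk_urls(urls, size=MAX_URLS_PER_SITEMAP):
    """Split a list of page URLs into groups small enough for one sitemap each."""
    urls = list(urls)
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_sitemap_index(sitemap_urls):
    """Build a sitemap index (a sitemap of sitemaps) listing each sitemap file."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for loc in sitemap_urls:
        sitemap = ET.SubElement(index, "sitemap")
        ET.SubElement(sitemap, "loc").text = loc
    body = ET.tostring(index, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical site with 120,000 pages, which needs three sitemap files.
    pages = [f"https://befound.pt/page-{n}/" for n in range(120_000)]
    chunks = chunk_urls(pages)
    files = [f"https://befound.pt/sitemap_{i}.xml" for i in range(1, len(chunks) + 1)]
    print(len(chunks), "sitemap files needed")
    print(build_sitemap_index(files))
```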

When you have multiple sitemaps, you can either specify your sitemap index file URL in your robots.txt file as shown in the example below:

Sitemap: https://befound.pt/sitemap_index.xml

Or, you can specify individual URLs for each of your sitemap files, as shown in the example below:

Sitemap: https://befound.pt/sitemap_pages.xml
Sitemap: https://befound.pt/sitemap_posts.xml

Hopefully you're now clear on how to create a robots.txt file with a sitemap location. It only takes a few minutes, and it will help your website!

Have you added your sitemap to your robots.txt file yet?
